Projects

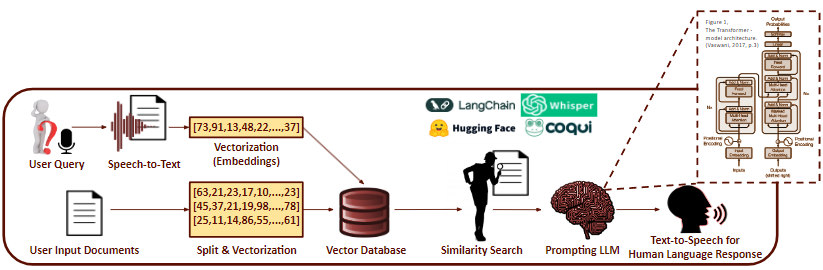

Q&A Chatbot via Retrieval Augmented Generation

Built a RAG-based Q&A system to mitigate LLM hallucination. Users upload PDF documents; the pipeline embeds them into a vector database that serves as the grounding source, then runs semantic search over it to retrieve relevant context before the LLM generates a response.
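The retrieve-then-generate flow described above can be illustrated with a minimal sketch. Everything here is an assumption for illustration: `embed` is a toy bag-of-words stand-in for a real embedding model, the chunk texts are made up, and `build_prompt` only assembles the prompt that would be sent to an LLM. It is not the project's actual pipeline.

```python
import math
from collections import Counter

def embed(text):
    """Toy bag-of-words vector; a stand-in for a real embedding model (assumption)."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, chunks, k=2):
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]

def build_prompt(query, chunks, k=2):
    """Place the retrieved context ahead of the question (hypothetical prompt format)."""
    context = "\n".join(retrieve(query, chunks, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

# Hypothetical document chunks, as if extracted from an uploaded PDF.
chunks = [
    "The warranty covers battery defects for two years.",
    "Shipping takes five business days.",
    "Returns are accepted within thirty days of purchase.",
]
top = retrieve("how long is the battery warranty", chunks, k=1)
prompt = build_prompt("how long is the battery warranty", chunks, k=1)
```

In a real system the toy `embed` would be replaced by a learned embedding model and the linear scan by a vector index; the ranking-by-similarity step stays the same.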

Pilot Workload Estimation via Multimodal ML

Built a multimodal ML model that estimates eVTOL pilot stress levels from 7 biometric modalities: heart rate, eye gaze, GSR, brain activity (fNIRS), body pose, grip force, and response time. Ground-truth labels were collected with the NASA Task Load Index (NASA-TLX).

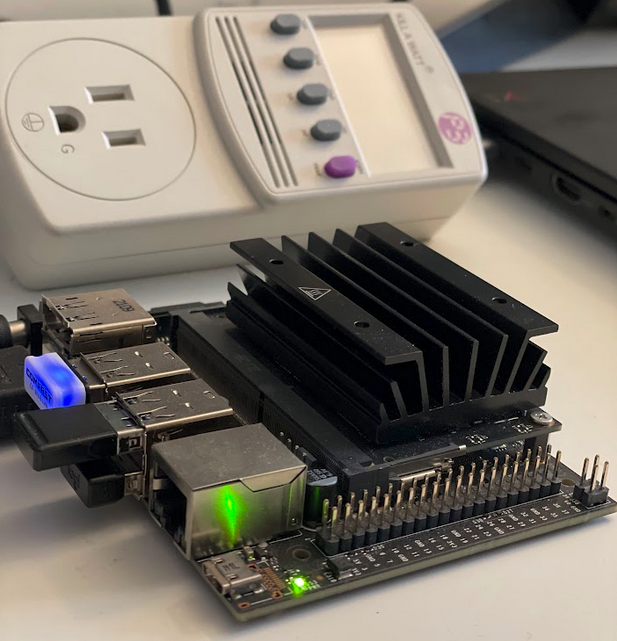

Large Generative Model On-Device Deployment & Optimization

Compressed a 72M-parameter virtual garment try-on model for deployment on an NVIDIA Jetson Nano 4GB using quantization, structured/unstructured pruning, and knowledge distillation. Conducted filter-wise sensitivity analysis to identify the filters that contribute most to output quality.
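One common way to run a filter-wise sensitivity analysis is ablation: disable one filter at a time and record how much the error grows. The sketch below shows that idea on a toy model. The "filters" are plain weight vectors, the data is synthetic, and all names (`response`, `predict`, `filter_sensitivity`) are hypothetical; the project's actual procedure is not specified here.

```python
def response(weights, x):
    """ReLU response of one filter, modeled as a dot product (toy assumption)."""
    return max(0.0, sum(w * xi for w, xi in zip(weights, x)))

def predict(filters, x, keep):
    """Sum the responses of all filters whose keep flag is True."""
    return sum(response(w, x) for w, k in zip(filters, keep) if k)

def mse(filters, data, keep):
    """Mean squared error of the (possibly ablated) model on the data."""
    return sum((predict(filters, x, keep) - y) ** 2 for x, y in data) / len(data)

def filter_sensitivity(filters, data):
    """Error increase caused by removing each filter on its own."""
    keep_all = [True] * len(filters)
    baseline = mse(filters, data, keep_all)
    scores = []
    for i in range(len(filters)):
        keep = keep_all.copy()
        keep[i] = False  # ablate exactly one filter
        scores.append(mse(filters, data, keep) - baseline)
    return scores

# Synthetic setup: targets come from the full model, so the baseline error is zero.
filters = [[1.0, 0.0], [0.0, 0.01], [0.5, 0.5]]
inputs = [(1.0, 2.0), (2.0, 1.0), (3.0, 3.0)]
data = [(x, predict(filters, x, [True] * 3)) for x in inputs]

scores = filter_sensitivity(filters, data)
# Least sensitive filters first: these are the safest pruning candidates.
prune_order = sorted(range(len(filters)), key=lambda i: scores[i])
```

Filters at the end of `prune_order` are the ones whose removal hurts most, so a structured-pruning pass would keep those and drop from the front of the list.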

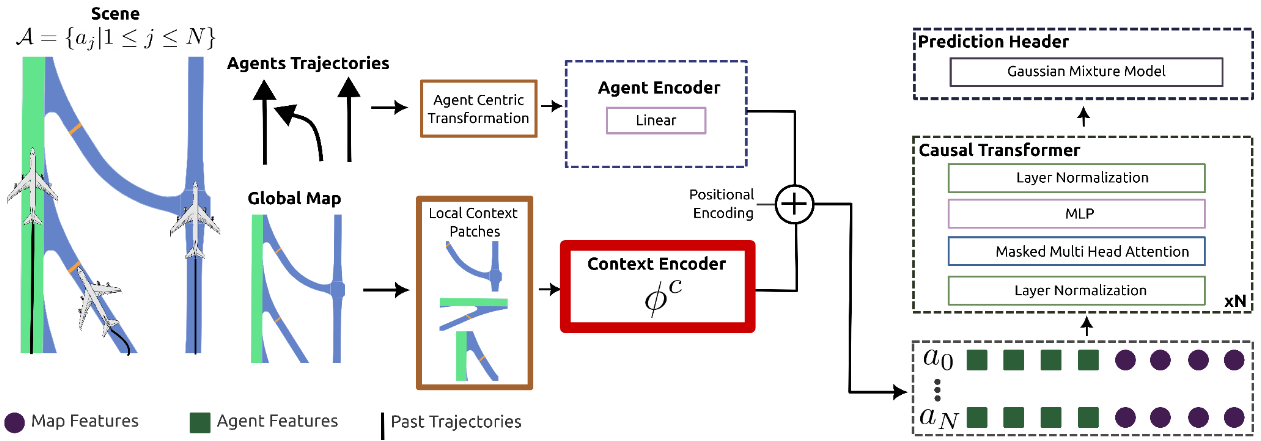

Motion Prediction in Airports via Heterogeneous Map Representations

Compared rasterized and graph-based airport map representations for aircraft motion forecasting using a transformer-based multi-modal joint prediction model (GPT-2 encoder + GMM head). Trained on 200 days of FAA SWIM trajectory data.
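A GMM output head is typically trained by minimizing the negative log-likelihood of the observed future position under the predicted mixture. The sketch below computes that loss for a single 2D point. It assumes isotropic components (one standard deviation per mode), which is a simplification chosen for brevity; the project's actual parameterization is not stated.

```python
import math

def gmm_nll(point, weights, means, sigmas):
    """Negative log-likelihood of a 2D point under an isotropic Gaussian mixture."""
    x, y = point
    log_terms = []
    for w, (mx, my), s in zip(weights, means, sigmas):
        sq_dist = (x - mx) ** 2 + (y - my) ** 2
        # Log-density of a 2D isotropic Gaussian with standard deviation s.
        log_pdf = -math.log(2 * math.pi * s * s) - sq_dist / (2 * s * s)
        log_terms.append(math.log(w) + log_pdf)
    # Log-sum-exp keeps the sum stable when the densities are tiny.
    m = max(log_terms)
    return -(m + math.log(sum(math.exp(t - m) for t in log_terms)))

# Two hypothetical predicted modes, e.g. "continue straight" vs. "turn off".
weights = [0.7, 0.3]
means = [(10.0, 0.0), (8.0, 5.0)]
sigmas = [1.0, 1.5]

loss_near_mode = gmm_nll((10.0, 0.2), weights, means, sigmas)
loss_far_away = gmm_nll((0.0, -20.0), weights, means, sigmas)
```

The loss is low when the observed position lies near any predicted mode and grows quickly when it is far from all of them, which is what lets the head represent several plausible futures at once.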

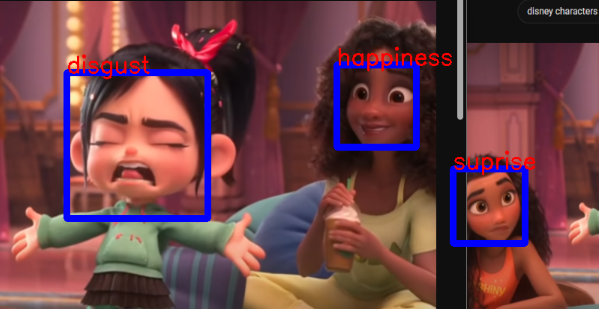

Human Facial Emotion Recognition & Classification

Trained a CNN classifier on the AffectNet benchmark (291K images, 8 emotion labels) to recognize facial expressions. Achieved ~70% validation accuracy. Analyzed class imbalance via confusion matrix, precision, recall, and F1 scores.
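Per-class precision, recall, and F1 can be read directly off a confusion matrix, which is what makes them useful for spotting classes hurt by imbalance. The sketch below assumes rows are true labels and columns are predictions; the 3-class matrix is invented for illustration and does not reflect this project's results.

```python
def per_class_metrics(cm):
    """Precision, recall, and F1 per class from a square confusion matrix.

    cm[i][j] counts samples whose true class is i and predicted class is j.
    """
    n = len(cm)
    metrics = []
    for k in range(n):
        tp = cm[k][k]
        fn = sum(cm[k]) - tp                        # true k, predicted otherwise
        fp = sum(cm[r][k] for r in range(n)) - tp   # predicted k, true otherwise
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        metrics.append({"precision": precision, "recall": recall, "f1": f1})
    return metrics

def accuracy(cm):
    """Overall accuracy: diagonal total over all samples."""
    total = sum(sum(row) for row in cm)
    return sum(cm[k][k] for k in range(len(cm))) / total

# Made-up imbalanced example: class 0 is common, class 2 is rare.
cm = [
    [90, 5, 5],
    [10, 30, 10],
    [2, 3, 5],
]
metrics = per_class_metrics(cm)
overall = accuracy(cm)
```

In this toy matrix the overall accuracy looks acceptable while the rare class has much lower precision and F1, the kind of gap that a single accuracy number hides.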